The Missing Layer

Identity tells you who someone is. Policy tells you what they're allowed to do. Neither tells you what they've actually done. That's the gap.

Same credentials. Same permissions. Different risk.

Every entity in your environment passes the same two checks: identity verification and policy authorization. But those checks return identical results for entities with fundamentally different risk profiles.

| Entity | Identity Layer (who they are) | Policy Layer (what they can do) | Participation History (what they've done) |
|---|---|---|---|
| New Hire (Human) | ✓ Valid | ✓ Authorized | ✗ Tier 0: no history |
| 10-Year Employee (Human) | ✓ Valid | ✓ Authorized | ✓ Tier 3: 200+ events, 90+ days |
| New AI Agent (Service) | ✓ Valid key | ✓ Authorized | ✗ Tier 0: no history |
| Established Agent (Service) | ✓ Valid key | ✓ Authorized | ✓ Tier 2: 50+ events, 30+ days |
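The table implies that a tier follows from just two numbers: how many events an entity has accumulated and how long its history is. Below is a minimal Python sketch of that kind of deterministic tiering. It assumes "200+" means "at least 200" and that both conditions must hold. The names `History`, `tier_for`, and `TIER_THRESHOLDS` are hypothetical, not MIR's API. Tier 1 is an invented placeholder, because this page does not define it.

```python
from dataclasses import dataclass

# (tier, minimum events, minimum history age in days), highest tier first.
# Tier 3 and Tier 2 values come from the table; nothing here is MIR's code.
TIER_THRESHOLDS = [
    (3, 200, 90),
    (2, 50, 30),
]


@dataclass(frozen=True)
class History:
    event_count: int   # number of recorded participation events
    history_days: int  # days since the entity's first recorded event


def tier_for(history: History) -> int:
    """Deterministic: the same event count and age always yield the same tier."""
    for tier, min_events, min_days in TIER_THRESHOLDS:
        if history.event_count >= min_events and history.history_days >= min_days:
            return tier
    # Assumption: any history below the Tier 2 thresholds counts as Tier 1.
    return 1 if history.event_count > 0 else 0
```

Because the function only compares counts against fixed thresholds, there is no model or score to explain. For example, an agent with 250 events but only 10 days of history would fall short of Tier 2 on age alone.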

What changes at the point of decision

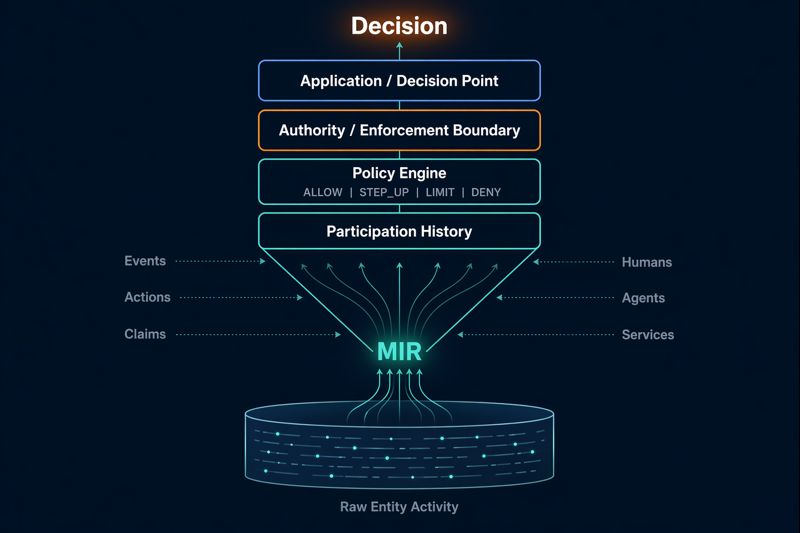

When a high-stakes action is requested, MIR looks up the entity's accumulated participation history and returns a recommendation: ALLOW, STEP_UP, LIMIT, or DENY.
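To make the decision step concrete, here is a hypothetical Python sketch. It maps an entity's tier to one of the four recommendations for a single high-stakes action, then leaves the final call to the caller. The mapping in `HIGH_STAKES_POLICY` and the `recommend` and `handle` functions are assumptions for illustration only. This page does not say how MIR chooses between ALLOW, STEP_UP, LIMIT, and DENY.

```python
from enum import Enum


class Recommendation(Enum):
    ALLOW = "ALLOW"
    STEP_UP = "STEP_UP"
    LIMIT = "LIMIT"
    DENY = "DENY"


# Hypothetical policy for one high-stakes action, keyed by participation tier.
# These choices are illustrative; they are not MIR's published mapping.
HIGH_STAKES_POLICY = {
    3: Recommendation.ALLOW,
    2: Recommendation.LIMIT,
    1: Recommendation.STEP_UP,
    0: Recommendation.DENY,
}


def recommend(tier: int) -> Recommendation:
    """Return a recommendation, clamping tiers outside 0..3 into that range."""
    return HIGH_STAKES_POLICY[max(0, min(tier, 3))]


def handle(rec: Recommendation) -> str:
    """The calling system, not MIR, decides what each recommendation means."""
    if rec is Recommendation.ALLOW:
        return "proceed"
    if rec is Recommendation.STEP_UP:
        return "require additional verification"
    if rec is Recommendation.LIMIT:
        return "proceed with reduced scope"
    return "block and route to review"
```

Keeping `recommend` and `handle` separate mirrors the split between a recommendation and a decision. One team might treat STEP_UP as a second approver and another as an MFA prompt, and neither choice changes the recommendation itself.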

What MIR is

MIR is participation history infrastructure. It records what entities have actually done over time and surfaces that history as a signal at the point of decision. It is not an identity provider, a policy engine, or a scoring system. It is the evidence layer that sits underneath your existing authorization stack.

- Deterministic, not ML. No models, no scores, no opacity. Tier thresholds are event count + history age.

- Recommendations, not decisions. MIR returns ALLOW, STEP_UP, LIMIT, or DENY. Your system decides what to do with it.

- No PII stored. External IDs are SHA-256 hashed. Events carry no free-form metadata.

- Neutral. MIR is infrastructure, not a platform. It serves the entities it tracks, not just the organizations that submit events.
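The "No PII stored" point can be illustrated with a short Python sketch. It hashes an external ID with SHA-256 from the standard `hashlib` module and records events with fixed fields only. The `ParticipationEvent` shape, the `make_event` helper, and the `ALLOWED_EVENT_TYPES` vocabulary are hypothetical. They show the idea, not MIR's actual event schema.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical fixed vocabulary; a closed set leaves no room for free-form text.
ALLOWED_EVENT_TYPES = frozenset({"login", "deploy", "approval"})


def hash_external_id(external_id: str) -> str:
    """SHA-256 hex digest of an external ID, so the raw ID is never stored."""
    return hashlib.sha256(external_id.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ParticipationEvent:
    # Fixed, typed fields only; there is deliberately no metadata field.
    entity_hash: str
    event_type: str
    occurred_at: datetime


def make_event(external_id: str, event_type: str) -> ParticipationEvent:
    if event_type not in ALLOWED_EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type!r}")
    return ParticipationEvent(
        entity_hash=hash_external_id(external_id),
        event_type=event_type,
        occurred_at=datetime.now(timezone.utc),  # timezone-aware timestamp
    )
```

A plain SHA-256 of a guessable identifier, such as an email address, can be recovered by hashing candidate values. This page does not say whether MIR salts or keys the hash.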

Where MIR sits in the stack

Who uses this

Security Teams

Before granting elevated access, check the requester's behavioral track record. Reduce blind trust in new identities.

AI/Agent Governance

An agent spawned 5 minutes ago and one operating for 90 days are not the same risk. Your system should know the difference.

Compliance

Auditable, timestamped record of what entities actually did. The kind of evidence regulators want to see.

Fraud Prevention

When a transaction triggers a review, see the entity's full behavioral history instantly. Pattern recognition at the point of decision.

See it in action

Try the sandbox with a public API key. No account needed. Or start a 14-day free trial.
